LLM Zoomcamp: Use zrok to access ElasticSearch container from anywhere

What's this?

This is a supplement for Module 2 of the LLM Zoomcamp from DataTalks.Club. It's about how to use an open-source tunneling tool called zrok to make your ElasticSearch container accessible via the public Internet, so that it can be used for RAG when running a model in a GPU environment that does not support running Docker containers, like Google Colab.

Why this?

For module 2 of the course, we will explore open-source models to replace the API service in the Generation part of Retrieval-Augmented Generation. To run these open-source models, a GPU is generally required[^1]. But in common free GPU environments such as Kaggle or Google Colab, we cannot run the ElasticSearch container as the search engine for our RAG app.

The lectures for module 2 use a nicely implemented minimal document store by Alexey called minsearch.py, which is enough for all purposes of the course. However, I want to keep using the ElasticSearch container, partly because it is more realistic for actual production[^2], and partly because it is cool as heck.

Introduction

Let's use the Colab GPU environment to run open-source models. We cannot run Docker containers there, so we need to run the ElasticSearch container somewhere else, e.g., in the GitHub Codespace that we used for module 1. The container's port 9200 inside the Codespace is then accessible on the Codespace network at port 9200, because we map it that way in the `docker run` command (`-p 9200:9200`).

However, the GitHub Codespace local network is not exposed by default, for security reasons. You do not get a public URL like https://www.elastic.co/elasticsearch that you can use in Colab to reach this container inside your Codespace[^3]. That's where zrok comes in.

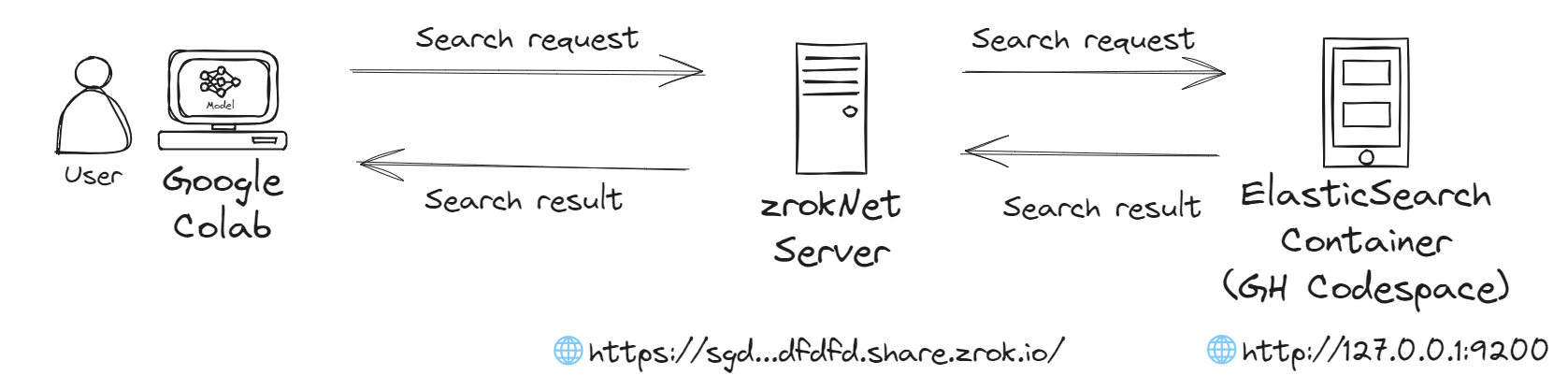

zrok is an open-source service that allows you to create a tunnel between a server on a private network and another private network or the public Internet. So our system becomes like this, with another machine managing communication between our Colab instance and our ElasticSearch container.

zrok can be self-hosted, or used as a web service called zrokNet with a generous free tier[^4]. This tutorial uses the web service for convenience.


Next, I will walk you through:

- How to install zrok on the device where you run your ElasticSearch container.

- How to run zrok to share the service.

- The limitations of zrok.

Install zrok

GitHub Codespace is a Linux environment, so we use the Linux guide. Sadly, the install script on the website does not work in Codespace, so we will install zrok manually. The manual steps on the website work, with some modifications due to the Codespace configuration.

First, open a shell inside the Codespace. Because of how Codespace is configured, the shell starts in the repo folder inside the `workspaces` folder, while the guide assumes we are at the root folder. To get to the root folder, we need to go up two levels.

```
/
├─ workspaces/
│  ├─ llm/
├─ bin/
├─ tmp/
```

This can be done easily with:

```shell
cd ../..
ls -la
```

We should see that we are now at the root folder, which contains the `tmp` folder where we are supposed to put the zrok tarball.

Now head to the zrok release page and get the link to the latest Linux AMD64 version. For example, the link for v0.4.32 is https://github.com/openziti/zrok/releases/download/v0.4.32/zrok_0.4.32_linux_amd64.tar.gz.


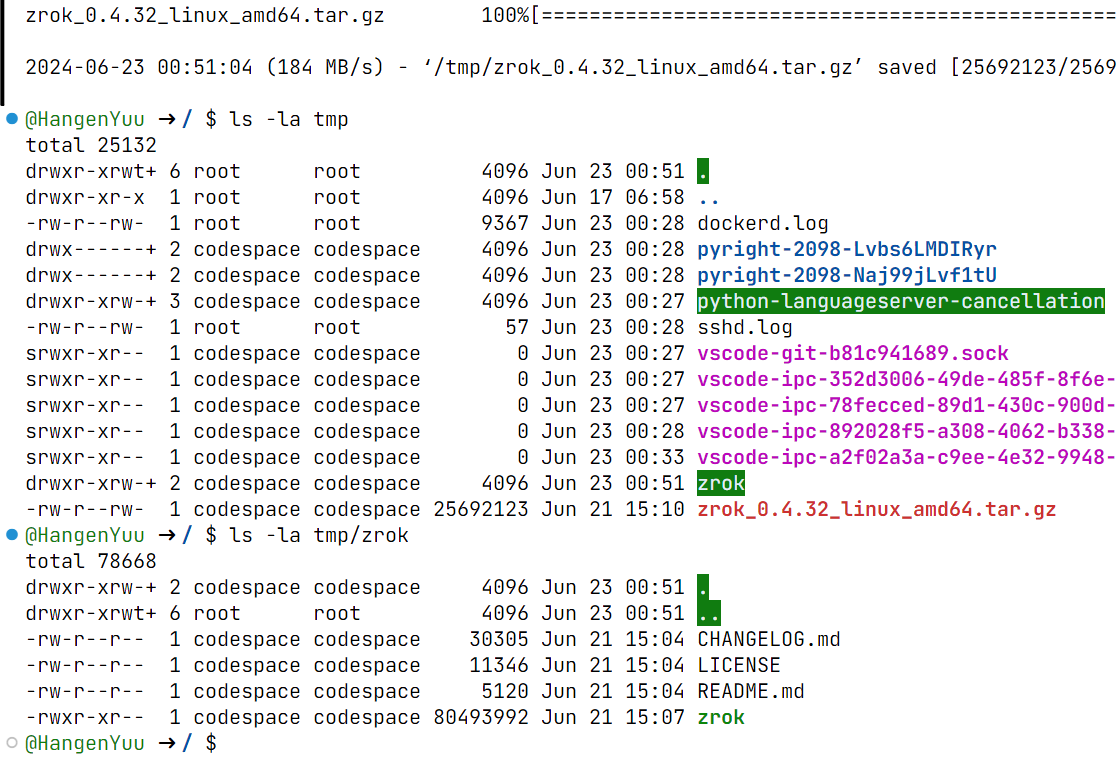
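Since the version number appears twice in the download link, a small helper can build the link from one value when a newer release comes out. This is a minimal sketch that assumes later releases keep the same `zrok_<version>_linux_amd64.tar.gz` naming as the v0.4.32 example above; the function name `zrok_release_url` is hypothetical.

```shell
# Hypothetical helper: build the zrok Linux AMD64 release URL for a given version.
# Assumes the naming pattern of the v0.4.32 example stays the same in later releases.
zrok_release_url() {
  local version="$1"   # e.g. 0.4.32, without the leading "v"
  printf 'https://github.com/openziti/zrok/releases/download/v%s/zrok_%s_linux_amd64.tar.gz\n' \
    "$version" "$version"
}
```

Calling `zrok_release_url 0.4.32` prints the v0.4.32 link shown above; swap in a newer version number to get its link.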

Run this command to create a new `zrok` folder in `tmp`, download the tarball, and extract it for installation:

```shell
mkdir -p /tmp/zrok && \
  wget -P /tmp/ https://github.com/openziti/zrok/releases/download/v0.4.32/zrok_0.4.32_linux_amd64.tar.gz && \
  tar -xf /tmp/zrok_0.4.32_linux_amd64.tar.gz -C /tmp/zrok
```

If the command runs successfully (the `wget` output ends with `/tmp/zrok_0.4.32_linux_amd64.tar.gz saved`), you should see the tarball and the `zrok` folder inside `tmp`, and the extracted `zrok` binary inside the `tmp/zrok` folder.
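To check the result without eyeballing `ls`, a small function can test for the tarball and the extracted binary. This is a sketch that assumes, as the `install /tmp/zrok/zrok` step implies, that the archive extracts a `zrok` executable at the top level of `/tmp/zrok`; `check_zrok_download` and its arguments are hypothetical.

```shell
# Hypothetical check: confirm the tarball was saved and the zrok binary was extracted.
# $1: download directory (default /tmp), $2: zrok version (default 0.4.32)
check_zrok_download() {
  local dir="${1:-/tmp}"
  local version="${2:-0.4.32}"
  local tarball="$dir/zrok_${version}_linux_amd64.tar.gz"

  if [ ! -f "$tarball" ]; then
    echo "missing tarball: $tarball" >&2
    return 1
  fi
  if [ ! -x "$dir/zrok/zrok" ]; then
    echo "missing or non-executable binary: $dir/zrok/zrok" >&2
    return 1
  fi
  echo "ok: $dir/zrok/zrok"
}
```

Running `check_zrok_download` with no arguments checks the default `/tmp` location used above and prints which file is missing if something went wrong.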


We now install the zrok binary (the CLI):

```shell
mkdir -p ~/bin && install /tmp/zrok/zrok ~/bin/
```

We need to add `~/bin` to the shell's executable path (`PATH`). We also want the change to persist, so we add it to the `~/.bashrc` configuration file:

```shell
echo 'export PATH="$HOME/bin:$PATH"' >> ~/.bashrc
source ~/.bashrc
```

This only persists the change for the `bash` shell. If you use `fish` or `zsh`, you will need to adapt the command yourself. For example, for `zsh`:

```shell
echo 'export PATH="$HOME/bin:$PATH"' >> ~/.zshrc
source ~/.zshrc
```
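Running the `echo ... >> ~/.bashrc` command more than once appends a duplicate `export` line each time. One way to avoid that is to append the line only when it is not already in the file. This is a minimal sketch; the function name `add_bin_to_path` is hypothetical, and you would pass `~/.zshrc` instead of `~/.bashrc` for `zsh`.

```shell
# Hypothetical helper: append the PATH export to an rc file only if it is not already there.
add_bin_to_path() {
  local rc="$1"
  # Single quotes keep $HOME and $PATH unexpanded, so the shell expands them at startup.
  local line='export PATH="$HOME/bin:$PATH"'
  # -q: quiet, -x: match the whole line, -F: fixed string instead of a regex
  if ! grep -qxF "$line" "$rc" 2>/dev/null; then
    printf '%s\n' "$line" >> "$rc"
  fi
}
```

Usage: `add_bin_to_path ~/.bashrc && source ~/.bashrc` can be re-run safely, since the second call finds the line and does nothing.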

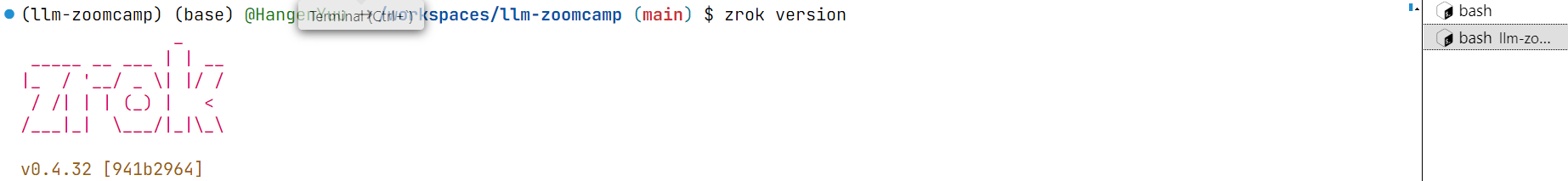

Open a new shell and test the installation by running `zrok version`. We should see the version that we just installed.
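If `zrok version` reports `command not found` in the new shell, `command -v` shows whether the binary is visible on `PATH` at all. A minimal sketch; `check_zrok_on_path` is a hypothetical name.

```shell
# Hypothetical check: report where the shell finds zrok, or fail with a hint.
check_zrok_on_path() {
  local zrok_path
  # command -v prints the resolved path and returns non-zero if zrok is not found.
  if zrok_path="$(command -v zrok)"; then
    echo "zrok found at $zrok_path"
  else
    echo "zrok not on PATH; is \$HOME/bin in PATH?" >&2
    return 1
  fi
}
```

After the install steps above, `check_zrok_on_path` should report `zrok found at` followed by the path of the binary inside your `~/bin` folder.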

Using zrok

We have installed zrok on our server. Now we need to sign up for zrokNet by following this guide.

I have already done that, so I will head straight to enabling the zrok environment with `zrok enable`.

Follow the guide above; I won't repeat the steps here.
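After signing up, zrokNet gives you an account token, and `zrok enable <token>` registers the current machine as a zrok environment. To avoid pasting the token directly into scripts, one option is to read it from an environment variable. In this sketch, the variable name `ZROK_TOKEN` and the function `enable_zrok` are hypothetical.

```shell
# Hypothetical wrapper: enable zrok with an account token stored in ZROK_TOKEN.
enable_zrok() {
  if [ -z "${ZROK_TOKEN:-}" ]; then
    echo "ZROK_TOKEN is not set; export it with your zrokNet account token first" >&2
    return 1
  fi
  zrok enable "$ZROK_TOKEN"
}
```

Usage: `export ZROK_TOKEN=...` with your own token, then `enable_zrok`.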

Now we can run the ElasticSearch container:

```shell
docker run --detach \
  --rm \
  --name elasticsearch \
  -m 4GB \
  -p 9200:9200 \
  -p 9300:9300 \
  -e "discovery.type=single-node" \
  -e "xpack.security.enabled=false" \
  docker.elastic.co/elasticsearch/elasticsearch:8.4.3
```

I prefer `--detach` mode, and I add a memory limit to the ElasticSearch container so that it does not terminate suddenly on GitHub Codespace due to the RAM limit.
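Elasticsearch can take a little while to start after `docker run --detach` returns, so sharing it immediately may expose a port that does not answer yet. A small polling loop is one way to wait. This is a sketch: the function name `wait_for_http`, the default of 30 attempts, and the 2-second delay are arbitrary choices, not part of the original setup.

```shell
# Hypothetical helper: poll a URL until it answers with a successful HTTP status.
# $1: URL, $2: max attempts (default 30), $3: seconds between attempts (default 2)
wait_for_http() {
  local url="$1" attempts="${2:-30}" delay="${3:-2}"
  local i=1
  while [ "$i" -le "$attempts" ]; do
    # -s: silent, -f: fail on HTTP 4xx/5xx, -o /dev/null: discard the response body
    if curl -sf -o /dev/null "$url"; then
      echo "ready: $url"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "gave up on $url after $attempts attempts" >&2
  return 1
}
```

Usage: `wait_for_http http://127.0.0.1:9200 && zrok share public http://127.0.0.1:9200` only starts sharing once the container responds.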

then share the service with

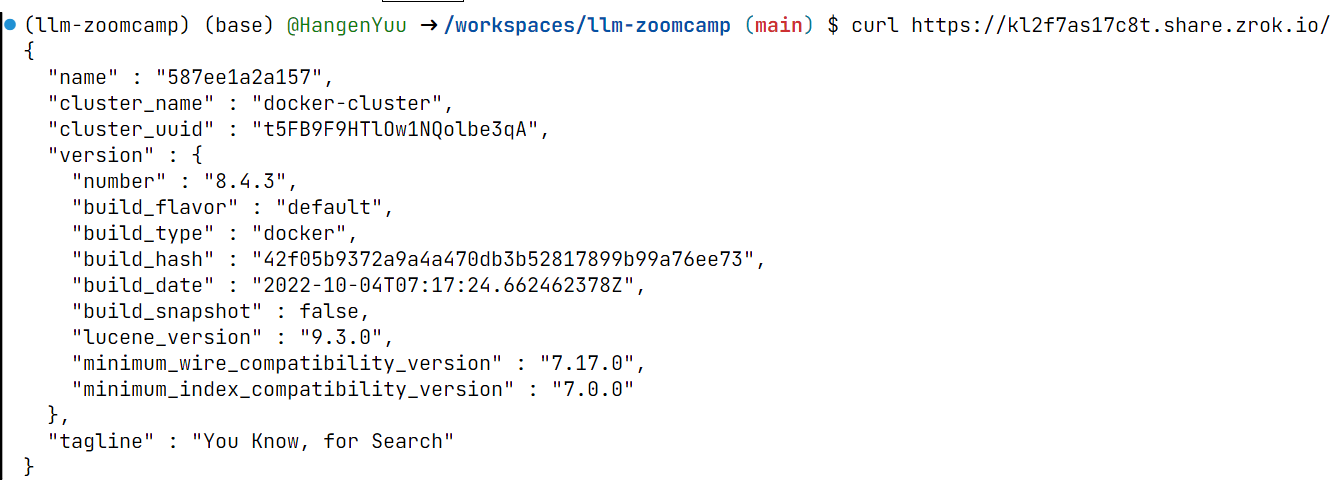

zrok share public http://127.0.0.1:9200

You should see the link in your shell, which now looks like this.

Running `curl` on the link should give exactly the same output as `curl http://127.0.0.1:9200`. But unlike the localhost link, this link is public, so you can access it from Google Colab or anywhere else.
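Besides comparing the two outputs by eye, you can check that the public link really reaches Elasticsearch: the root endpoint of an Elasticsearch node returns a JSON document whose `tagline` is `"You Know, for Search"`. A minimal sketch; `looks_like_elasticsearch` is a hypothetical name.

```shell
# Hypothetical check: read an HTTP response body on stdin and look for the Elasticsearch tagline.
looks_like_elasticsearch() {
  grep -q 'You Know, for Search'
}
```

Usage: `curl -s http://127.0.0.1:9200 | looks_like_elasticsearch && echo local ok`, then the same with your zrok link in place of the localhost URL, for example from a `!curl` cell in Colab.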

Limitations

While creating the index and searching work with no issues and negligible latency, the indexing process is both slower and produces errors with 5 documents.

It's better to do the indexing over the localhost network of the machine where we run our ElasticSearch container instead.
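Indexing from the Codespace itself, against `http://127.0.0.1:9200`, avoids the tunnel entirely. For many documents, the Elasticsearch `_bulk` API accepts them in one request as newline-delimited JSON (NDJSON): an action line, then the document on the next line. The sketch below builds that body from input with one JSON document per line. The function name `to_bulk_ndjson`, the input file `documents.jsonl`, and the index name `course-questions` are illustrative assumptions, and each input line is assumed to already be valid single-line JSON.

```shell
# Hypothetical helper: turn one-JSON-document-per-line input into an Elasticsearch _bulk body.
# $1: index name. Reads documents on stdin, writes NDJSON (ending in a newline) on stdout.
to_bulk_ndjson() {
  local index="$1" doc
  # "|| [ -n "$doc" ]" also handles a last line without a trailing newline.
  while IFS= read -r doc || [ -n "$doc" ]; do
    [ -z "$doc" ] && continue   # skip blank lines
    printf '{"index":{"_index":"%s"}}\n' "$index"
    printf '%s\n' "$doc"
  done
}
```

For example, `to_bulk_ndjson course-questions < documents.jsonl > bulk.ndjson` followed by `curl -s -H 'Content-Type: application/x-ndjson' -X POST http://127.0.0.1:9200/_bulk --data-binary @bulk.ndjson` sends everything in one request. `--data-binary` matters here because plain `-d` strips the newlines that NDJSON relies on.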

In actual production, we would not expose our search engine publicly like this; instead, we would connect it to our generation service over a private network.

`zrok` supports this with the `zrok share private` command, but it requires `zrok` to be installed on the other machine as well. Technically, Google Colab is still just a Linux machine, so I could follow the exact same steps to install `zrok` there, but I had not tried it before writing this guide 😅. I will update this later.

From the guide, `zrok` can also be run as a container on the machine itself with the command

```shell
docker run --detach \
  --rm \
  --network=host \
  --volume ~/.zrok:/.zrok \
  --user "${UID}" \
  openziti/zrok share public \
  --headless \
  http://127.0.0.1:9200
```

but on its own this is less convenient than just running `zrok share public`, and I have not quite gotten a `docker-compose.yml` to work yet.

Conclusion

That's all for this guide! I hope it was helpful for you 😄.

[^1]: Ollama (also introduced in the lesson by Alexey) or llama.cpp (via its Python binding) might be what you need if you really cannot get a GPU.

[^2]: Though, as we will see, I do many things that are convenient but unfit for production. I will point them out.

[^3]: Also true if you run your ElasticSearch container on your PC or on a VM from any cloud vendor.

[^4]: This web page also explains more about what zrok can do: "zrok establishes secure connections from your local environment to a public endpoint, allowing others to access your local service through a public URL. This can be a web application, a development server, or other locally running services."